Written by Ioannis Mollas, Research Associate, School of Informatics, Aristotle University of Thessaloniki (AUTH)

AI is everywhere today! In our everyday lives and activities and most business sectors, it is considered standard practice. Businesses can now exploit machine learning (ML) for fraud detection, customer or document intelligence, forecasting, and many more.

However, when ML-enabled applications are deployed in critical situations affecting people’s lives, models’ accountability is essential. In these cases, models that are intrinsically interpretable have a significant advantage. Unfortunately, in practice, such models often cannot match the performance of more complex models. As a result, efficient methods for extracting interpretations from more complicated models are required. By enabling interpretability, we enhance model accountability and can also transform any existing solution into a more informative and trustworthy one. Such complex ML systems can be accompanied by explanations about the entire procedure (global interpretations) or specific decisions they make (local interpretations).

But how do we define interpretable machine learning? A machine learning model is interpretable when it can provide reasons for its actions. As discussed in the “white boxes” section below, there are models that are intrinsically interpretable. At the same time, there are other models, as shown in the “black boxes” section, that need additional techniques to be interpretable. However, interpretability comes at a cost because the models that typically perform well, in many cases, are not intrinsically interpretable.

Only a few ML models can provide this information inherently: white boxes, also known as transparent models. The most well-known transparent models are the decision trees, linear models like logistic or ridge regression, and statistical models like ARIMA. On the other hand, more complex models, which do not offer explanations intrinsically, are called black box models. Random forests, support vector machines, and neural networks are a few out of the many black box models.

Models as Boxes – “the trade-off”

A wide range of tasks are solved using machine learning models. We can divide these models into “interpretable” and “accurate” ones. Humans can understand interpretable models, our white box models, since they can easily provide reasons for their actions. Their predictive performance, however, is often lower. Our accurate black box models, on the other hand, are top-performing systems in various tasks. Nonetheless, because of their complexity, they cannot easily justify their predictions. As a result, the accuracy-interpretability “trade-off” emerges.

White Boxes – “the interpretable”

Most of the time, different transparent models offer different kinds of interpretations. The main transparent models we often use in industrial applications are decision trees, linear models, and Bayesian models.

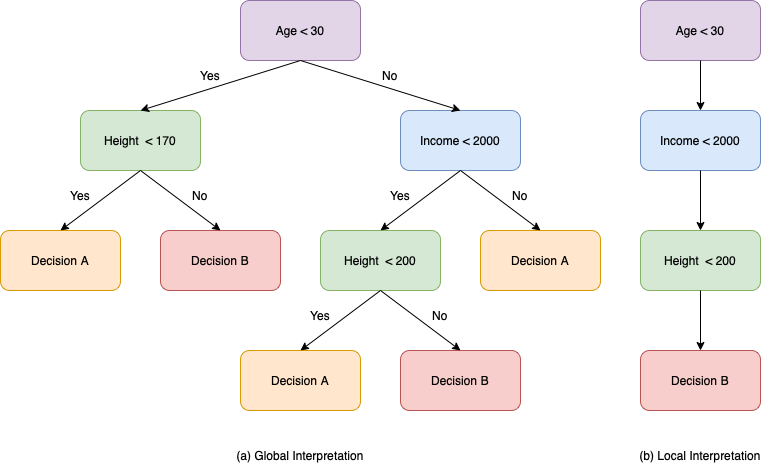

Let’s focus first on decision trees. Decision trees can provide interpretations for multiple scopes, both local and global. Globally, they can present a graph of the whole structure of the tree to the end-user (Figure 1a). On the other hand, for a specific decision of the tree, they can present a path from the root to the leaf as the local explanation (Figure 1b).
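The two scopes described above can be sketched with a hand-written toy tree. This is only an illustration: the features, thresholds, and class labels are hypothetical and are not taken from Figure 1.

```python
# Toy decision tree as nested dicts; all features and thresholds are hypothetical.
TREE = {
    "feature": "age", "threshold": 50,
    "left": {"leaf": "absence"},
    "right": {
        "feature": "max_heart_rate", "threshold": 150,
        "left": {"leaf": "presence"},
        "right": {"leaf": "absence"},
    },
}

def global_view(node, depth=0):
    """Global interpretation: list every split and leaf of the tree."""
    pad = "  " * depth
    if "leaf" in node:
        return [f"{pad}predict {node['leaf']}"]
    lines = [f"{pad}if {node['feature']} <= {node['threshold']}:"]
    lines += global_view(node["left"], depth + 1)
    lines.append(f"{pad}else:")
    lines += global_view(node["right"], depth + 1)
    return lines

def local_path(node, instance):
    """Local interpretation: the root-to-leaf conditions met by one instance."""
    conditions = []
    while "leaf" not in node:
        feature, threshold = node["feature"], node["threshold"]
        if instance[feature] <= threshold:
            conditions.append(f"{feature} <= {threshold}")
            node = node["left"]
        else:
            conditions.append(f"{feature} > {threshold}")
            node = node["right"]
    return conditions, node["leaf"]

print("\n".join(global_view(TREE)))
patient = {"age": 62, "max_heart_rate": 140}
conditions, label = local_path(TREE, patient)
print(" and ".join(conditions), "->", label)
```

The global view grows with the size of the tree, while the local path only grows with its depth, which is why a single decision can stay readable even when the full tree does not.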

Considering the interpretability of linear models like logistic or linear regression, we can say that such algorithms are probably the most transparent and comprehensible. We can extract the weights assigned by these algorithms to our input features and understand how changes in the input affect the prediction. Therefore, we can extract the features’ importance at both the global and local levels. The linear relationship between a feature’s value and the final prediction makes these algorithms more comprehensible. For example, suppose we have two features, age and height, with importance values of 0.7 and -0.4, respectively (Figure 2). In that case, we know that increasing the age will lead to a higher prediction, while increasing the height would lead to a lower prediction.
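As a small worked example of the age and height case, the sketch below uses the two weights from the text. The intercept and the feature values are assumptions made for illustration only.

```python
# Hypothetical linear model using the weights from the example above.
WEIGHTS = {"age": 0.7, "height": -0.4}
BIAS = 0.0  # assumed intercept, not given in the text

def predict(instance):
    """Linear prediction: intercept plus the weighted sum of the features."""
    return BIAS + sum(WEIGHTS[name] * value for name, value in instance.items())

def local_contributions(instance):
    """Per-feature contribution (weight * value) to one prediction."""
    return {name: WEIGHTS[name] * value for name, value in instance.items()}

person = {"age": 1.0, "height": 2.0}  # assumed standardised feature values
older = {"age": 2.0, "height": 2.0}   # same person, age increased by one unit
taller = {"age": 1.0, "height": 3.0}  # same person, height increased by one unit

print(predict(person), predict(older), predict(taller))
print(local_contributions(person))
```

The weights themselves act as the global explanation, while the per-feature contributions explain one specific prediction.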

Several other models can be interpreted under certain conditions. One example is k-nearest neighbours (k-NN), which is only interpretable when the number of neighbours and the feature set size are both limited. We might explain a 5-NN prediction to an interested person by presenting the five neighbours, but imagine doing the same for a 100-NN. It’s not viable, right? Additionally, probabilistic models like Naive Bayes are interpretable since we can read each feature’s importance from its conditional probability.

Decision rules, a technique similar to decision trees, can be interpreted locally and globally by displaying the set of rules used for a prediction or the entire list of rules learned. Finally, generalized linear models (GLMs) and generalized additive models (GAMs) are algorithms that achieve enhanced performance while retaining interpretability. Based on GAM techniques, new interpretable algorithms have been introduced, like the explainable boosting machine (EBM), which is a cyclic gradient boosting GAM [Lou et al. (2013)].

Advantages

- Most of the time easy to interpret

- Low computational cost

- Compliance with legislation

- A plethora of tools and libraries

Disadvantages

- Low performance on unstructured data

- Worse performance compared to more complex models

- They might still be difficult to comprehend (e.g., large input space or considerable depth in a tree)

Black Boxes – “the accurate”

While explainable, white box models cannot consistently produce high-performance results. When dealing with multidimensional inputs like 2D time-series data, word embeddings, and images, black box models tend to perform better than white box models. Moreover, we can see performance gain using black box models even with simple tabular data.

Ensembles like Random Forest, XGBoost, CatBoost, and AdaBoost appear to have high capabilities in solving tasks with tabular data. Even though the individual elements of an ensemble can be interpretable, the final model, due to its complexity, is incomprehensible.

On the other hand, in tasks related to text, like text classification, recurrent neural networks and, more recently, transformers are paving the way towards fully automated systems thanks to their superior performance. Nevertheless, a significant drawback of such algorithms, which may be an obstacle to their deployment, is their uninterpretable nature. Multiple ways have been introduced to address this issue, such as backpropagating signals for recurrent networks or exploiting information from the attention weights for transformers.

Recurrent networks are known to perform exceptionally well in time-series forecasting and predictive tasks. They are the only practical solution since simple white box models cannot handle two-dimensional multivariate time series data. As a result, we may have to sacrifice interpretability for tasks like electricity demand forecasting or predictive maintenance.

Finally, conventional ML algorithms cannot provide adequate performance for image-related tasks, particularly multimodal ones. Therefore, neural networks, specifically convolutional networks, are necessary to achieve desirable performance. Moreover, Generative Adversarial Networks (GANs) and algorithms like YOLO and SSD achieve even better performance in image-related tasks.

Advantages

- Top-performing algorithms

- Handling unstructured data successfully

- Allowing multi-modal data

Disadvantages

- Uninterpretable most of the time

- Resource demanding

- Not compliant with legislation

Why Do We Need IML?

Why do we need interpretability? ML, and AI systems, in general, have been integrated into several applications. However, sometimes these systems become unreasonable. Let’s examine two use cases where ML didn’t operate effectively and even created new problems.

Use case 1: Facial Recognition Fails to Authenticate People in Unemployment Systems

Due to the COVID-19 pandemic, many bureaucratic processes have shifted to digital applications. One of them, the unemployment system of the United States, has also moved online. When an application is filled out, the person filling it out must be authenticated, but doing this online is more complicated because fraudulent actions, such as impersonation, are easier to carry out. Therefore, a company came to the rescue by implementing a facial recognition system to authenticate users.

Regardless of the company’s statements about the perfect performance of its product (approximately 99.99% accuracy), the final product failed to serve its purpose. In action, facial recognition fell into its biases. It didn’t manage to authenticate many users, refusing to give them unemployment benefits, causing economic problems for both individuals and the company while decreasing people’s trust in AI technologies.

In this scenario, IML could help in two ways. First, a lightweight explanation provided to the end-user could help them identify what went wrong in the authentication process, for example, that hair was covering the face, the image was blurry, or only half the face was visible in the uploaded picture. Moreover, explanations could help the company test complex cases, identify why the model struggles or fails to authenticate individuals correctly, and take any action needed to fix the problem.

Use case 2: Facebook’s Automated Hate Speech Algorithm Failed to Tackle Anti-Muslim Content

Online racist and hateful activity has intensified because of the anonymity provided by social media platforms and their integration into people’s daily lives. Social media companies are legally obliged to address such issues as hate speech. Because the volume of unfiltered content exceeds any company’s human resources, automated ML algorithms have been developed and employed. However, algorithmic bias and instability are usually apparent in such automated systems.

Recently, a large class-action suit filed against Facebook was settled. According to the suit, Facebook’s failure to adequately monitor hate speech on its platform led to a growth in anti-Muslim content that was allowed to remain. Muslim advocates argue that this promotes anti-Muslim hatred and makes Muslims feel oppressed.

In this case, the algorithmic bias could have been detected and addressed using IML during the development phase. When algorithmic decisions are complemented with explanations and supplied to humans, it is simpler for them to decide to limit content. Additionally, when a user’s post is removed (either automatically or manually), an explanation can be offered to help them understand the reasons for this action. The user could then choose to republish the post after deleting the hate speech content or request a review if they believe the report is incorrect.

We can now justifiably argue that IML offers benefits to end-users, businesses, and society overall, helping all three parties involved through different aspects:

- Make decision-systems accountable for their predictions

- Increase the trust level between people and AI systems

- Prevent economic loss to people and companies

- Avoid legal issues

- Prevent racist and discriminatory actions

- Allow debugging of ML systems

- Explore new knowledge captured within a complex model

Interpretable Machine Learning and Applications

Can we possibly have the best of both worlds? The interpretability of the white box models and the performance of the black box ones? This is what IML methods are all about!

Given that IML is a crucial component of every automated ML system, the research community has put a lot of effort into developing novel IML tools. This move has also been encouraged by sponsors (e.g., governments) through large research programs [Gunning & Aha (2019)].

As already introduced, an interpretation can be either local or global, rationalising a specific decision or the whole model structure. These techniques can be either model agnostic, therefore applicable to any model, or model-specific, applicable to particular models and architectures. Finally, the different interpretability techniques can be data-dependent or independent.

Interpretability applications in tabular data

Business-related sectors, like banking and insurance, often deal with predictive tasks using tabular data. While explainability is not always required, there are times when decisions directly impact people’s lives, and thus an explanation for their actions may be necessary. A healthcare facility’s ML-assisted system is a great example. Doctors and nurses consult such systems on patient treatment and admission decisions. Therefore, improper advice can lead to bad decisions, possibly resulting in casualties. While simple, interpretable ML techniques perform well to minimise errors and eventually costs, businesses tend to use more complex models to solve those tasks, like ensembles, which generally perform well in tabular data. Therefore, interpretability is a necessary property of such models.

A few techniques that apply to tabular data include LIME, Permutation Importance, LionForests and DefragTrees. Throughout this section, a running example demonstrates the interpretations of the various techniques. We will use the “Heart (statlog)” dataset, which includes features such as age, gender, chest pain, and maximum heart rate achieved, among others, to predict whether a patient has heart disease or not (indicated as the ‘presence’ or ‘absence’ class, respectively), using a Random Forest (RF) classifier. Accompanying material: Colab Notebook on Tabular Data.

LIME

LIME is a state-of-the-art, local, model-agnostic technique [Ribeiro et al. (2016)], which is partially data-independent as it has three variations for tabular, textual, and image data. Its mechanism is simple. First, it generates a few synthetic instances very similar to the examined instance, in our example, a patient’s record. This is called the “neighbourhood” where the examined instance belongs. LIME’s neighbourhood generation process differs depending on the data type.

For tabular data, LIME identifies the distribution of each feature and creates synthetic instances (neighbours) by adding noise to the examined instance. Then, it asks the complex, uninterpretable model (the RF in this case) to predict each of them. Next, it trains a linear, transparent model on the neighbourhood: the synthetic instances and the original instance are the inputs, whereas the target variables are the predictions supplied by the black box model. As a result, this is a regression task. We get our final interpretation by extracting the coefficients of the linear model, one weight per feature.
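The steps above can be sketched in a few lines of NumPy. This is a simplified illustration and not the LIME library itself: the black box function, the Gaussian noise, and the proximity kernel are assumptions, and the real implementation includes further steps (such as feature discretisation and feature selection) that are omitted here.

```python
import numpy as np

def black_box(X):
    """Stand-in for the complex model: a hypothetical non-linear scorer."""
    return 1.0 / (1.0 + np.exp(-(2.0 * X[:, 0] - X[:, 1] ** 2)))

def lime_like_explanation(instance, predict_fn, n_neighbours=500,
                          noise_scale=0.5, kernel_width=1.0, seed=0):
    rng = np.random.default_rng(seed)
    # 1. Neighbourhood: the instance itself plus noisy copies of it.
    noise = rng.normal(0.0, noise_scale, size=(n_neighbours, instance.size))
    X = np.vstack([instance, instance + noise])
    # 2. Targets are the black box predictions, so this is a regression task.
    y = predict_fn(X)
    # 3. Neighbours closer to the instance get a larger weight (assumed kernel).
    distances = np.linalg.norm(X - instance, axis=1)
    weights = np.exp(-(distances ** 2) / kernel_width ** 2)
    # 4. Weighted least squares fit of a linear surrogate with an intercept.
    A = np.hstack([X, np.ones((X.shape[0], 1))])
    sw = np.sqrt(weights)[:, None]
    coef, *_ = np.linalg.lstsq(A * sw, y * sw[:, 0], rcond=None)
    return coef[:-1]  # per-feature weights; the intercept is dropped

instance = np.array([0.2, 1.0])
feature_weights = lime_like_explanation(instance, black_box)
print(feature_weights)
```

Around this instance the toy black box increases with the first feature and decreases with the second, so the surrogate's weights should carry a positive and a negative sign, respectively.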

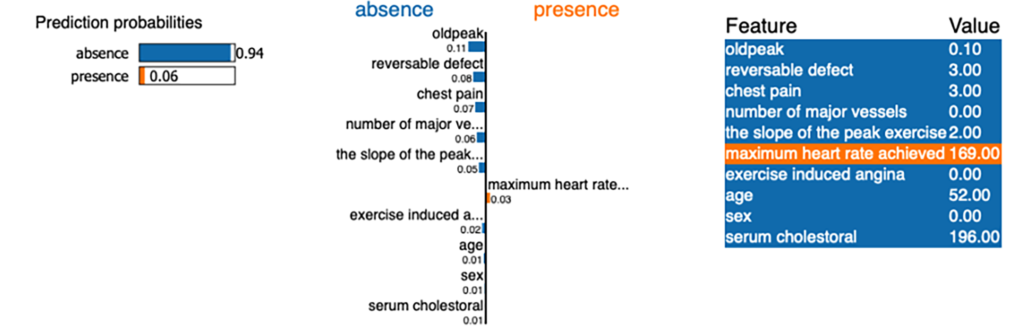

In Figure 3, we can see an example explanation as produced by LIME. For the chosen patient, the model concluded that heart disease is absent (the absence class has a probability of 0.94). LIME has assigned blue and orange colours to the features to explain the prediction. Features with blue colour contribute to the ‘absence’ class, while features with orange colour contribute to the ‘presence’ class. Feature ‘oldpeak’ seems to be the most important feature, supporting the prediction of the ‘absence’ class.

Permutation Importance

Another technique applicable to tabular data is Permutation Importance (PI) [Breiman (2001)]. PI is a data-independent, global, model-agnostic algorithm that may be used to provide a simple, baseline explanation. The concept is that we shuffle the values of one feature at a time across a set of instances and then assess how much the predictions change. Then, we assign an importance score to each feature based on the difference in the predictions. Similar to LIME, PI’s explanations are presented through bar plots, or in a table, like the one shown in Figure 4. The most significant features, with positive weights, are presented at the top of the table, whereas those at the bottom are the least important ones. Negative values indicate that shuffling a feature made the predictions even more accurate than with the real data! This is more common in small datasets.
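A minimal sketch of the shuffling idea follows. It assumes that the change in predictions is measured as a drop in accuracy, which is one common choice; the classifier and the data are hypothetical.

```python
import random

def model(row):
    """Hypothetical classifier: uses feature 0 only and ignores feature 1."""
    return 1 if row[0] > 0.5 else 0

def accuracy(rows, labels):
    return sum(model(r) == y for r, y in zip(rows, labels)) / len(rows)

def permutation_importance(rows, labels, n_features, seed=0):
    rng = random.Random(seed)
    baseline = accuracy(rows, labels)
    importances = []
    for j in range(n_features):
        column = [r[j] for r in rows]
        rng.shuffle(column)  # break the link between feature j and the label
        shuffled = [r[:j] + [v] + r[j + 1:] for r, v in zip(rows, column)]
        # Importance is the accuracy lost when feature j is shuffled.
        importances.append(baseline - accuracy(shuffled, labels))
    return importances

data_rng = random.Random(42)
rows = [[data_rng.random(), data_rng.random()] for _ in range(200)]
labels = [1 if r[0] > 0.5 else 0 for r in rows]
importances = permutation_importance(rows, labels, n_features=2)
print(importances)
```

Shuffling the ignored feature leaves every prediction unchanged, so its score is zero, while shuffling the feature the model relies on costs a large share of the accuracy.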

DefragTrees

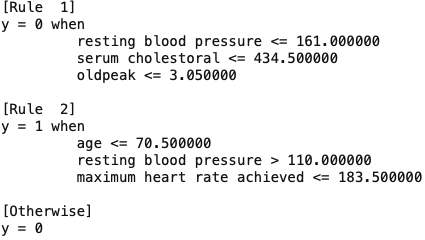

DefragTrees is a data-dependent, global, model-specific technique. It interprets tree ensembles by approximating the model’s output through a single tree that is as simple as possible [Hara and Hayashi (2018)]. When applying DefragTrees to our trained RF model, we acquire one simple decision tree which summarises the RF’s knowledge. Then, we can present all the rules of this decision tree. This is the global interpretation of our black box model. In Figure 5, we can see the ordered rules as extracted from the tree produced by DefragTrees. y=0 represents the ‘Absence’ class, while y=1 represents the ‘Presence’ class.

LionForests

LionForests is a data-dependent, local algorithm designed explicitly for explaining random forest models [Mollas et al. (2021)]. For an instance’s prediction, it collects all the paths from the ensemble that voted for that outcome, and after carefully reducing them, it aggregates them into a single IF-THEN rule. An example of a prediction’s interpretation using the “Heart (statlog)” dataset, utilised throughout this section, is presented below.

If 1.5≤chest pain≤3.5 & 161≤ maximum heart rate achieved ≤ 202 & 115≤resting blood pressure ≤143 & 179.5≤serum cholestoral≤199 & 48.5≤age≤55.5 then absence.

Figure 6. Interpretation provided by LionForests for the examined instance (i.e. patient)

Interpretability applications in text data

ML also has a massive impact on NLP applications. While statistical approaches were the first to be used for text classification and topic extraction, ML and deep learning models were introduced as better solutions to those tasks. Neural networks and transformer architectures perform exceptionally well in tasks like summarization and text classification. We will begin with interpretability techniques used in text classification, for example, hate speech detection. We train a Transformer model (BERT) to classify short texts from social media platforms. The task is to decide whether a text contains hate speech and to assign it to the “Hate Speech” or “Not Hate Speech” class. Accompanying material: Colab Notebook on Textual Data.

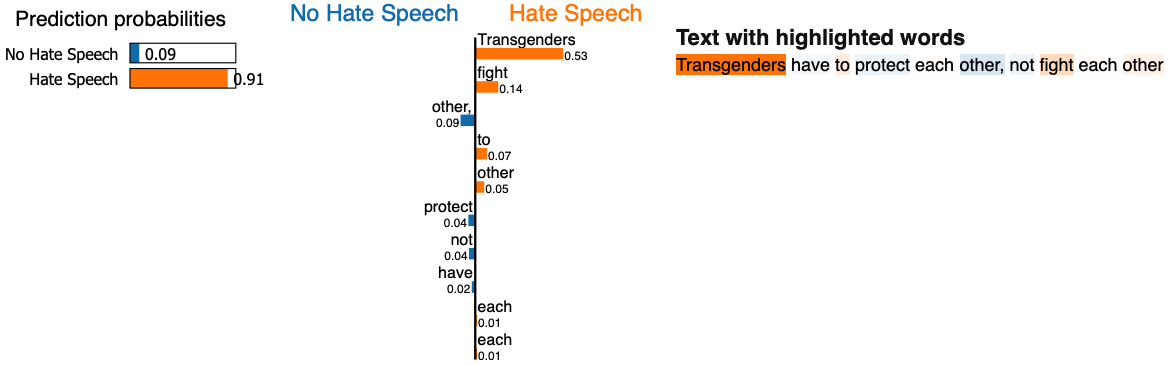

LIME

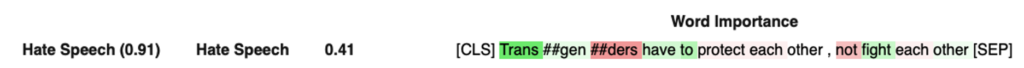

We have introduced LIME in the previous section, but in this section, we present its application to textual datasets. LIME only alters the neighbourhood generation process in textual tasks. To generate synthetic neighbours for a specific input (e.g., a document), it removes one or more terms at random until it has the necessary number of neighbours. The user specifies the latter. Its performance on textual datasets has been proven remarkably accurate. For a given sentence (“Transgenders have to protect each other, not fight each other”), it explains which words contributed the most to the predicted hate speech category (highlighted in orange). The transformer model predicted that this sentence contains “Hate Speech”. We know, however, that this sentence does not contain hate speech; instead, it is supportive of the transgender community.

According to LIME’s explanation, the words “Transgenders” and “fight” contribute the most to the predicted class. This probably means that the model, during training, received “Hate Speech” examples containing these words and therefore classified this example similarly. Thanks to the explanation, we can debug the model by reviewing the training examples containing these words for any potential issues. For instance, the model should also encounter examples without hate speech content that contain “transgenders” or similar terms.
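The neighbourhood generation for text described above can be sketched as follows. This is an illustrative approximation rather than the LIME library's code; the whitespace tokenisation and the sampling scheme are assumptions.

```python
import random

def text_neighbours(sentence, n_neighbours, seed=0):
    """Create neighbours by removing one or more words at random.

    Returns (mask, text) pairs; mask[i] is 1 if word i was kept, else 0.
    """
    rng = random.Random(seed)
    words = sentence.split()
    neighbours = []
    for _ in range(n_neighbours):
        n_remove = rng.randint(1, len(words) - 1)  # always keep at least one word
        removed = set(rng.sample(range(len(words)), n_remove))
        mask = [0 if i in removed else 1 for i in range(len(words))]
        text = " ".join(w for w, keep in zip(words, mask) if keep)
        neighbours.append((mask, text))
    return neighbours

sentence = "Transgenders have to protect each other, not fight each other"
for mask, text in text_neighbours(sentence, n_neighbours=3):
    print(mask, text)
```

Each neighbour's text would be sent to the black box model, while its binary mask serves as the input of the linear surrogate, so that every coefficient corresponds to one word of the original sentence.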

Anchors

Anchors, a technique very similar to LIME, is a data-dependent, model-agnostic, local technique [Ribeiro et al. (2018)]. It is applicable to textual and tabular data. This explainability technique provides a rule that “anchors” the prediction locally. Specifically, it presents which feature values must hold to (nearly) always have the same prediction, regardless of the changes (e.g., removal of a word) to the remainder of the instance’s feature values. Anchors suggests that if the words “Transgenders”, “not” and “other” exist in a sentence, then 92.9% of the time the model will predict “Hate Speech”. However, this interpretation may be wrong as it did not include the word “fight” which seems very relevant to the “Hate Speech” category. Anchors also presents this information with a GUI (Figure 8).

Anchor: Transgenders & not & other then Hate Speech
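To make the percentage attached to an anchor more concrete, the sketch below estimates how often a prediction stays the same when the anchor words are kept and the remaining words are randomly dropped. The classifier is a hypothetical stand-in and says nothing about the behaviour of the fine-tuned BERT model, and the sampling scheme is a simplification of what the Anchors algorithm does.

```python
import random

def toy_model(words):
    """Hypothetical classifier standing in for the real model."""
    if "Transgenders" in words and "fight" in words:
        return "Hate Speech"
    return "Not Hate Speech"

def anchor_precision(words, anchor, model, n_samples=1000, seed=0):
    """Share of perturbed texts that keep the original prediction
    when the anchor words are always present."""
    rng = random.Random(seed)
    original = model(words)
    same = 0
    for _ in range(n_samples):
        # Keep anchor words; drop every other word with probability 0.5.
        sample = [w for w in words if w in anchor or rng.random() < 0.5]
        same += model(sample) == original
    return same / n_samples

words = "Transgenders have to protect each other not fight each other".split()
weak = anchor_precision(words, {"Transgenders", "not", "other"}, toy_model)
strong = anchor_precision(words, {"Transgenders", "fight"}, toy_model)
print(weak, strong)
```

For this toy classifier, an anchor that omits a word the model depends on only holds about half of the time, whereas an anchor containing both decisive words always holds.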

Integrated Gradients

Integrated Gradients (IG) is a high-performing state-of-the-art local interpretability technique for neural networks (model-specific) [Sundararajan et al. (2017)]. Given the same sentence, IG extracts gradients for a set of interpolated samples between the examined instance and a baseline input by applying backpropagation from the output to the input. It eventually produces importance scores for each input token by averaging the obtained gradients and scaling them by the difference between the input and the baseline.
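The interpolation and averaging steps can be sketched on a tiny differentiable model. The logistic unit, its weights, and the all-zero baseline are assumptions chosen so that the gradient can be written by hand; in practice the gradients come from backpropagation through the network.

```python
import math

WEIGHTS = [1.5, -2.0, 0.5]  # hypothetical model parameters

def model(x):
    """Toy differentiable model: a logistic unit over three inputs."""
    z = sum(w * v for w, v in zip(WEIGHTS, x))
    return 1.0 / (1.0 + math.exp(-z))

def gradient(x):
    """Analytic gradient of the model output with respect to the input."""
    p = model(x)
    return [p * (1.0 - p) * w for w in WEIGHTS]

def integrated_gradients(x, baseline, steps=200):
    avg_grad = [0.0] * len(x)
    for k in range(steps):
        alpha = (k + 0.5) / steps  # midpoint rule along the straight path
        point = [b + alpha * (v - b) for v, b in zip(x, baseline)]
        for i, g in enumerate(gradient(point)):
            avg_grad[i] += g / steps
    # Scale the averaged gradients by the distance from the baseline.
    return [(v - b) * g for v, b, g in zip(x, baseline, avg_grad)]

x = [1.0, 0.5, 2.0]
baseline = [0.0, 0.0, 0.0]
attributions = integrated_gradients(x, baseline)
print(attributions)
print(sum(attributions), model(x) - model(baseline))
```

A useful sanity check is that the attributions add up, approximately, to the difference between the model's output for the input and for the baseline.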

IG does not provide importance scores for each word but for each token. Transformer models typically split words into subword tokens, which lets them handle rare or unseen words with a fixed vocabulary. This process replaces preprocessing like stemming. For example, the term “Transgenders” was split into three tokens: “Trans”, “##gen”, and “##ders”, as seen in the example presented in Figure 9. Interestingly, IG assigned different importance scores to the word’s various tokens. With green, we can see the tokens which influenced the prediction of the “Hate Speech” class, while with red, the tokens that influenced the “No Hate Speech” class.

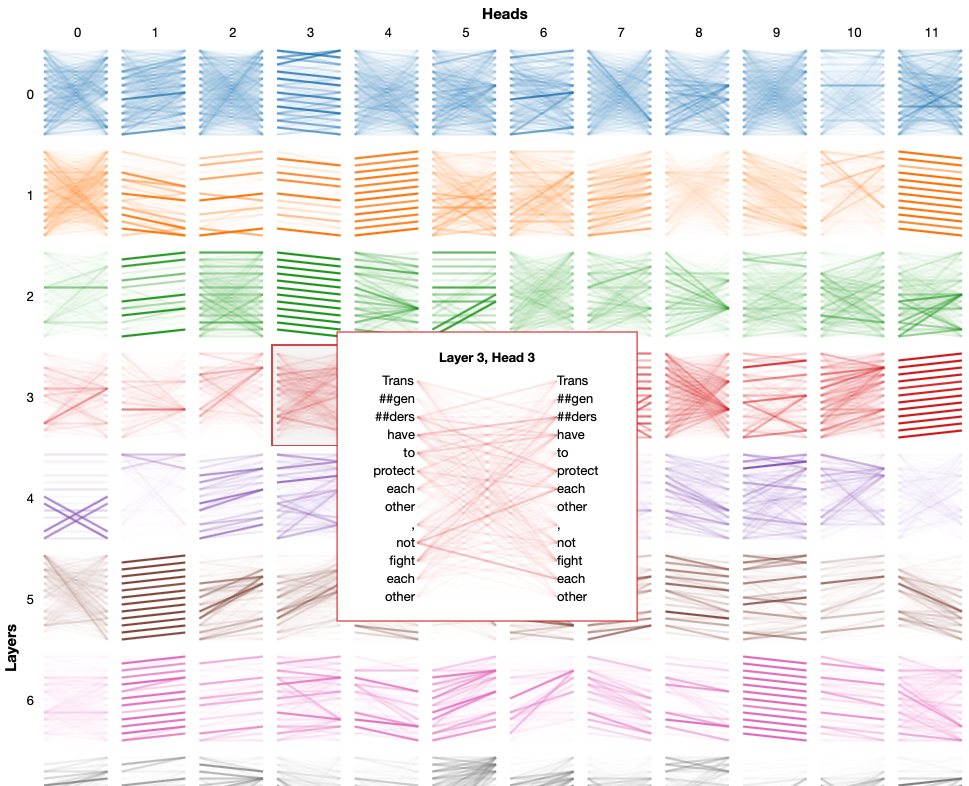

BertViz

BertViz [Vig (2019)] is another tool for this type of data. More specifically, it is a user-friendly tool for visualizing the attention of Transformers. This four-part tutorial introduces attention matrices. The visualization tool provides numerous views, each offering a unique perspective on the attention mechanism. This interpretation differs from the classic representations presented above. The attention matrix of each head inside each layer is depicted in the following figure (Figure 10) as an example. The relation of each word to another is presented by selecting a specific attention matrix.

Interpretability applications in image data

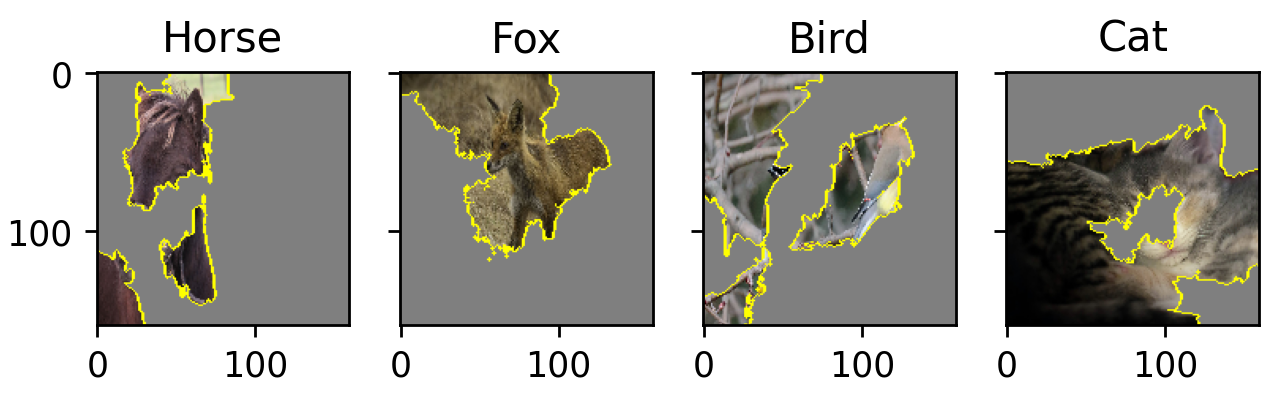

In images, most of the time, interpretability is presented using heatmaps of important parts of the input image. It is unusual to present a rule or a graph to explain an image-related decision. Most interpretability techniques rely on the backpropagation functionality of neural networks. However, there are model-agnostic techniques we can apply as well. Let’s have a look at a few examples. We will use a pre-trained network called “MobileNetV2” as our “running” test case to classify four images of a horse, a fox, a bird, and a cat, as presented below (Figure 11). The classifier identified all of them correctly. Accompanying material: Colab Notebook on Image Data.

LIME

Let’s use LIME, our model-agnostic local interpretation technique, to identify the important parts of each image that led to the decision. In images, it works at the superpixel level. A “superpixel” is a group of pixels formed by applying an image segmentation technique.

LIME generates neighbours by greying out superpixels at random (one or more at a time) until it has the desired number of neighbours (as defined by the user). Similar to LIME’s tabular process, it then trains a local model, where the input is a binary vector denoting the presence or absence (greyed out) of each superpixel and the output is the model’s prediction for each neighbour. In our example (Figure 12), we can see the superpixels identified as important for the predictions of the different images. The highlighted superpixels for the horse, fox, and cat are acceptable. However, we can see from the bird that the explanation is probably problematic.

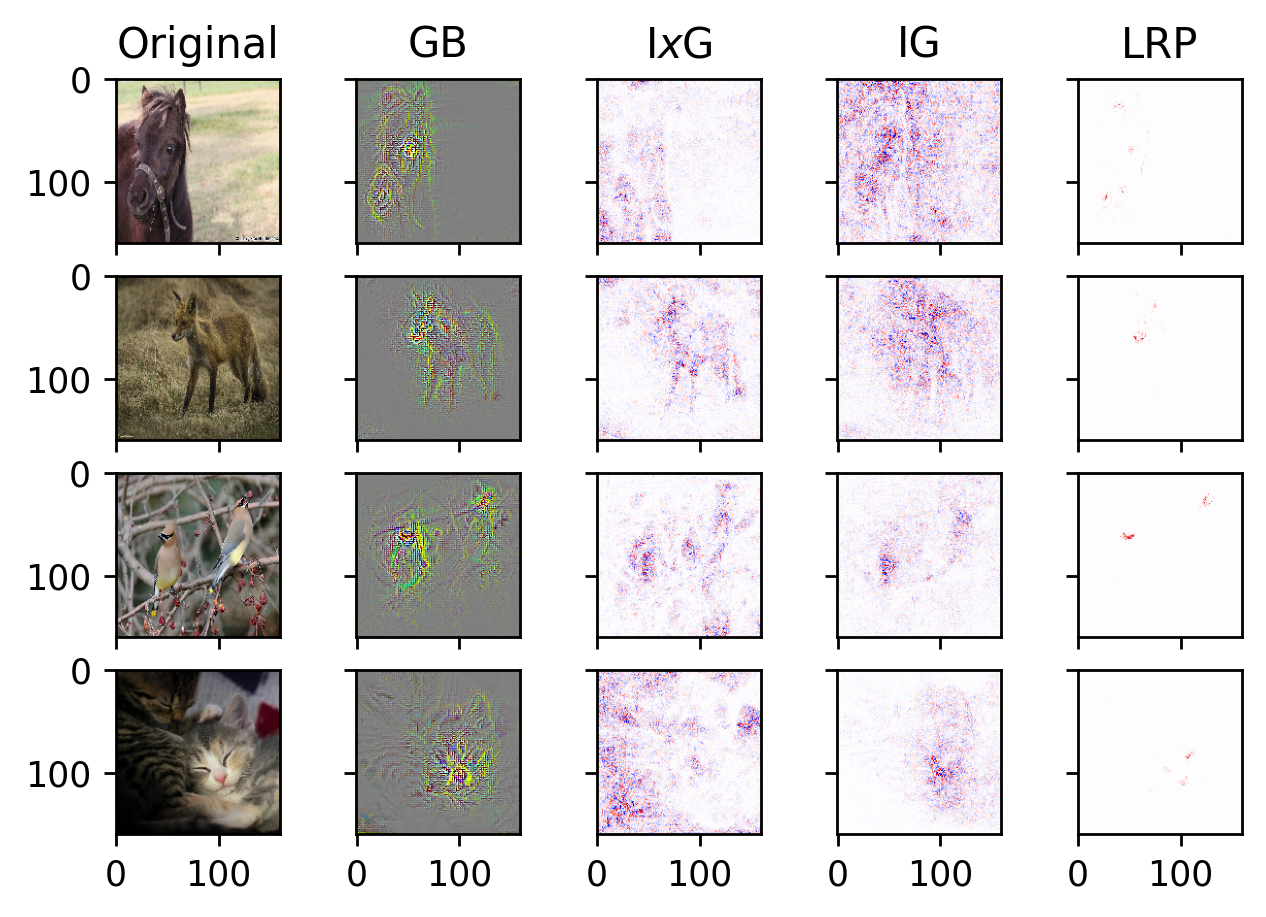

Other neural-specific techniques

While there are other techniques for providing explanations agnostically in image classification tasks performed by neural networks (e.g. RISE and Meaningful Perturbations), model-specific techniques tend to provide better explanations for the end user. We can apply numerous neural-specific techniques, but here we are utilizing four; three gradient-based and one using layer-wise relevance propagation, presenting all of their results in Figure 13.

InputxGradients (IxG) [Shrikumar et al. (2017)] and GuidedBackpropagation (GB) [Springenberg et al. (2014)] back-propagate the output gradients to the input to identify the parts of it that influenced the model’s prediction. We already discussed Integrated Gradients (IG). IG needs a baseline from which to interpolate towards the examined instance. In image-related tasks, we provide as baselines a totally white image, a totally black image, or both. Finally, Layer-wise Relevance Propagation (LRP) is a relevance-based approach that propagates relevance from the output layer to contributing neurons [Bach et al. (2015)]. Each neuron collects the relevance scores returned by the layer above, sums them up, and propagates the result into the previous layer. We perform this recursively from one layer to the previous until we reach the input image, which provides us with the importance of each pixel.

Interpretability applications in time series

The time-series domain includes a variety of tasks. Time-series forecasting and time series prediction are two main tasks. Moreover, time series can vary between univariate and multivariate. The former is usually a single signal given in a time window. In this case, we have a one-dimensional input in our model. Traditional models can be employed, while recurrent neural architectures are also suitable.

Regarding their explainability, we treat univariate time series like tabular data. On the other hand, in multivariate time series data, we may have multiple signals across time (time window), and therefore our input will be two-dimensional. In that case, we usually cannot use our interpretation techniques directly. Accompanying material: Colab Notebook on Time Series Data.

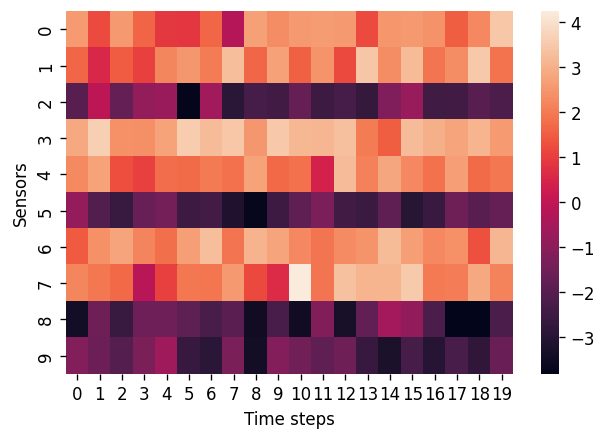

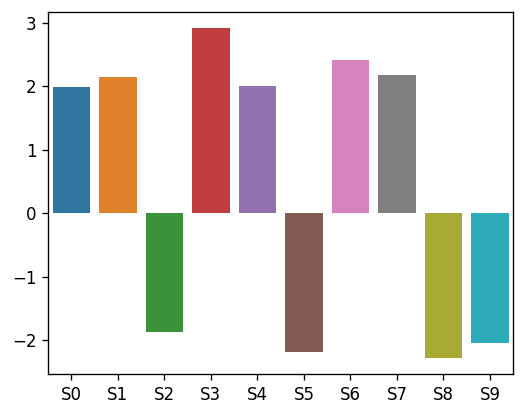

One very interesting topic related to time series is predictive maintenance. We will present an example of time series prediction in the task of predictive maintenance to identify the reasons behind the decisions. We developed a model that receives a time series signal as an input to demonstrate these techniques. This signal has 20 records (time steps) for a total of 10 separate sensors. As a result, the input is 10 x 20. Given the sensors’ measurements, we train a neural network to predict if a failure will occur in the next time step. In this example, we used the Turbofan engine degradation simulation dataset. Below, we can see an instance and its measurements across the different sensors for 20 time steps (Figure 14).

LIME

LIME will be used to interpret our time-series predictor once again. It cannot be directly applied to two-dimensional time-series data. As a result, to utilise LIME to explain a model that makes predictions based on 2D time-series data, we will provide it with a flattened version of the input.

Instead of giving our 10 x 20 example to LIME, we will provide a one-dimensional array of 200 values. Each time step of each sensor will be assigned an importance score by LIME. This information is represented as a heatmap in Figure 15a. Although, in this case, we have time steps defined per sensor as input to the predictor, we might as well define bigger time windows. A distinct presentation is required in these cases. One method is to average the importance scores of a sensor over all time steps and present them to the end user (Figure 15b). Therefore, we convert the explanation to the sensor level. This, however, may lead to incorrect interpretations, which must be evaluated.
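The reshaping involved can be sketched as follows, assuming the window is stored sensor by sensor. The input values and the importance scores are made up for illustration and do not come from the Turbofan dataset or from LIME.

```python
N_SENSORS, N_STEPS = 10, 20

def flatten(window):
    """Turn a sensors x time-steps window into one row of 200 values."""
    return [value for sensor_row in window for value in sensor_row]

def unflatten(scores):
    """Map 200 importance scores back onto the 10 x 20 grid (heatmap)."""
    return [scores[s * N_STEPS:(s + 1) * N_STEPS] for s in range(N_SENSORS)]

def sensor_level(scores):
    """Average each sensor's scores over all time steps."""
    return [sum(row) / N_STEPS for row in unflatten(scores)]

# Hypothetical input window and importance scores, for illustration only.
window = [[float(s * N_STEPS + t) for t in range(N_STEPS)] for s in range(N_SENSORS)]
flat = flatten(window)
scores = [0.01 * (i % N_STEPS) for i in range(len(flat))]
print(len(flat), sensor_level(scores)[:3])
```

One reason the sensor-level view can mislead is that positive and negative scores of the same sensor cancel out when averaged, so a sensor with strong but opposing influences may appear unimportant.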

LioNets

LioNets is a neural network-based local interpretation technique comparable to LIME [Mollas et al. (2022)]. It generates synthetic neighbours by utilising the neural network’s latent space. Inspired by auto-encoder neural architectures, from the trained model, an encoder is extracted.

In a manner similar to LIME’s tabular generation procedure, we encode the inspected instance and create synthetic neighbours using its latent representation. Then, all we need is a decoder to transform the generated neighbours into the original space. The technique then works similarly to LIME. It uses the predictions of the model for the neighbours and a linear model to extract the explanations. When the predictor and decoder are properly trained, LioNets can deliver more accurate explanations. The interpretations offered by LioNets are comparable to those provided by LIME, yielding a significance score at each time step for each sensor. In this example (Figure 16), however, we will show the importance scores of the most influential sensor, as well as the sensor values and their product.

Methods Summary

In the following table, we have summarized all the techniques discussed in this section, along with some of their properties.

| Technique | Scope | Applicability | Data dependency |
|---|---|---|---|
| LIME | local | model agnostic | partially independent (tabular, text, image and time series data) |
| Permutation Importance | global | model agnostic | tabular only |
| DefragTrees | global | tree ensembles | tabular only |
| LionForests | local | random forests | tabular only |
| Anchors | local | model agnostic | partially independent (tabular, text, image) |
| BertViz | local | transformer models | text only |
| Integrated Gradients | local | neural networks | tabular, text, image and time series data |
| InputxGradients | local | neural networks | tabular, text, image and time series data |
| GuidedBackpropagation | local | neural networks | tabular, text, image and time series data |
| Layer-wise Relevance Propagation | local | neural networks | tabular, text, image and time series data |
| RISE | local | model agnostic | image only |
| LioNets | local | neural networks | text and time series |

Evaluating Interpretability

But how do we choose among these interpretability options, and how do we evaluate their ability to explain a machine learning model? While there is much work regarding interpretability techniques, there is limited research regarding their evaluation. The available evaluation methods for interpretability techniques can be categorized as functional or human-grounded [Doshi-Velez & Kim (2017)].

Evaluations with functional metrics

Metrics for the evaluation of interpretability techniques that do not require human involvement are called functional metrics. Different functional evaluation metrics have been designed, depending on the type of output an interpretability technique produces.

A common metric for techniques that provide feature importance or saliency maps is the number of non-zero weights. Because it counts only the weights that are not of neutral (zero) importance, this metric represents the complexity of an interpretation. Interpretations with many non-zero weights are less comprehensible since the end-user must examine more elements. Another metric is stability, also known as robustness [Alvarez-Melis and Jaakkola (2018)], which assesses how sensitive an interpretability technique is to minor changes in the input. An important family of evaluation metrics is faithfulness-based [Du et al. (2019)] and includes infidelity [Yeh et al. (2019)] and truthfulness [Mollas et al. (2020)]. These metrics simulate user behavior by removing or changing elements of the input to check whether the model’s prediction changes accordingly.
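To make these metrics more concrete, here is a small Python sketch. It is a simplified illustration under our own assumptions, not the exact definition of infidelity or truthfulness from the cited papers: `toy_predict` and `toy_explain` are hypothetical stand-ins for a model and an interpretability technique, and the deletion check replaces the most important feature with a baseline value of zero.

```python
def non_zero_weights(weights, tol=1e-9):
    """Complexity: how many elements the end-user has to inspect."""
    return sum(1 for w in weights if abs(w) > tol)

def stability(explain_fn, x, eps=0.01):
    """Largest change in any weight when a single feature is nudged by eps."""
    base = explain_fn(x)
    worst = 0.0
    for i in range(len(x)):
        nudged = list(x)
        nudged[i] += eps
        diff = max(abs(a - b) for a, b in zip(base, explain_fn(nudged)))
        worst = max(worst, diff)
    return worst

def deletion_check(predict_fn, weights, x, baseline=0.0):
    """Faithfulness-style check on the feature with the largest absolute weight.

    Replacing that feature with the baseline should move the prediction in
    the direction that the sign of its weight implies.
    """
    top = max(range(len(weights)), key=lambda i: abs(weights[i]))
    removed = list(x)
    removed[top] = baseline
    change = predict_fn(removed) - predict_fn(x)
    expected = -weights[top] * (x[top] - baseline)
    return change * expected > 0

# Hypothetical model and explainer, used only to exercise the functions.
def toy_predict(x):
    return 2.0 * x[0] - 3.0 * x[1] + 0.0 * x[2]

def toy_explain(x):
    return [2.0, -3.0, 0.0]  # a linear model has the same weights everywhere

instance = [1.0, 1.0, 5.0]
print(non_zero_weights(toy_explain(instance)))                      # 2
print(stability(toy_explain, instance))                             # 0.0
print(deletion_check(toy_predict, toy_explain(instance), instance)) # True
```

A lower non-zero count and a lower stability score are preferable, while a failed deletion check suggests that the explanation does not reflect what the model actually does.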

When ground-truth explanations, also known as rationales, are available, other metrics that can be employed are the classic ML scores like the F1 score and the AUPRC, which compare the produced explanation with the annotated explanations [DeYoung et al. (2019)].
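As a rough sketch of this comparison, the snippet below computes an F1 score between the tokens an explanation highlights and a human-annotated rationale. The function name and the example tokens are hypothetical, and treating both sides as token sets is a simplifying assumption.

```python
def rationale_f1(explained_tokens, rationale_tokens):
    """F1 overlap between highlighted tokens and annotated rationale tokens."""
    predicted, gold = set(explained_tokens), set(rationale_tokens)
    overlap = len(predicted & gold)
    if overlap == 0:
        return 0.0
    precision = overlap / len(predicted)  # highlighted tokens that are in the rationale
    recall = overlap / len(gold)          # rationale tokens that were highlighted
    return 2 * precision * recall / (precision + recall)

score = rationale_f1(["terrible", "boring", "movie"], ["terrible", "boring"])
print(round(score, 2))  # 0.8
```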

The evaluation metrics differ slightly when dealing with interpretability techniques that produce rules as explanations. Coverage is the fraction of examples to which a rule explanation applies, and precision is the fraction of those covered examples for which the rule agrees with the model’s prediction [Ribeiro et al. (2018)]. Another metric is the rule length, which reflects a rule’s complexity. Some users prefer longer rules, while others prefer shorter ones. It is also common in rule-based interpretability techniques to investigate their properties. For example, the conclusiveness [Mollas et al. (2021)] property ensures that an explanation is correct, contains all the necessary elements, and is free of errors.
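The rule metrics can be illustrated with a short Python sketch. The rule format (a dictionary that maps each feature to an allowed value range) and the loan-style example data are assumptions made for this illustration only.

```python
def rule_applies(rule, instance):
    """A rule maps each feature name to an inclusive (low, high) range."""
    return all(low <= instance[f] <= high for f, (low, high) in rule.items())

def coverage_and_precision(rule, rule_prediction, instances, model_predictions):
    covered = [p for x, p in zip(instances, model_predictions) if rule_applies(rule, x)]
    # Coverage: share of all instances that the rule applies to.
    coverage = len(covered) / len(instances)
    # Precision: share of covered instances where the model agrees with the rule.
    precision = sum(p == rule_prediction for p in covered) / len(covered) if covered else 0.0
    return coverage, precision

# Hypothetical rule: "if 30 <= age <= 60 then approve".
rule = {"age": (30, 60)}
instances = [{"age": 25}, {"age": 35}, {"age": 45}, {"age": 55}, {"age": 70}]
model_predictions = ["reject", "approve", "approve", "reject", "reject"]

coverage, precision = coverage_and_precision(rule, "approve", instances, model_predictions)
rule_length = len(rule)  # number of conditions in the rule
print(coverage, round(precision, 2), rule_length)  # 0.6 0.67 1
```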

Human-grounded evaluation


One can argue that the best evaluation methods for interpretability techniques involve real users. Surveys and questionnaires can present the user with interpretations produced by several techniques for the same example and then ask which of them the user understands better or prefers. Other tests provide the end user only with the input and an explanation and ask the user to make a prediction. The interpretation technique is then evaluated by comparing the user’s prediction to the model’s prediction. One sub-category of this approach is application-grounded evaluation, in which expert users assess interpretability applied to real-world tasks.
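The prediction test described above boils down to an agreement rate. The sketch below shows one simple way to score it; the function name and the example labels are hypothetical.

```python
def simulation_agreement(user_predictions, model_predictions):
    """Share of cases where the user, seeing only the input and the
    explanation, predicted the same output as the model."""
    if not model_predictions or len(user_predictions) != len(model_predictions):
        raise ValueError("need two non-empty lists of equal length")
    matches = sum(u == m for u, m in zip(user_predictions, model_predictions))
    return matches / len(model_predictions)

users = ["spam", "ham", "spam", "ham"]
model = ["spam", "ham", "ham", "ham"]
print(simulation_agreement(users, model))  # 0.75
```

A higher agreement suggests that the explanations help users anticipate what the model will do.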

Even though these evaluation methods are crucial and necessary, they cannot be used on their own, since users may have biases that lead to a deceptive or wrong assessment. Therefore, an unbiased assessment should combine human-grounded evaluation with functional metrics.

Conclusions

In this article, we discussed the interpretability of machine learning models. First, we presented basic notions of interpretability, like white box and black box models, and a few examples of why interpretability is necessary. We then showcased some interpretability techniques for black boxes applied to different data types, including tabular, textual, image, and time series data. Finally, we outlined how these techniques can be evaluated.

Establishing interpretability for machine learning-powered systems can impact both people’s lives and the industry. Regulating, debugging, and understanding the actions of systems such as self-driving cars or healthcare support systems can prevent the loss of human life and help businesses avoid negative repercussions. Additionally, users can trust machine learning systems when they know that these systems can be held accountable for their actions. AI gets better with interaction, and interaction needs trust.

Medoid AI: Enabling IML for your AI

At Medoid AI, we have put interpretability and explainability at the heart of the AI solutions we deliver. Our signature requirements analysis process examines model-trust issues right from the design phase. This allows us to focus on the appropriate IML methods that will complement the models we build and deliver. Does your organization have an already deployed model that you don’t trust, requiring time, effort and resources to meticulously examine its output all the time? Visit our website and contact us for help! The best ML model is the one you trust and understand.

Supporting Material (GitHub & Colabs)

- GitHub repository for this blog post

- [1] Colab Notebook on Tabular Data

- [2] Colab Notebook on Textual Data

- [3] Colab Notebook on Image Data

- [4] Colab Notebook on Time Series Data

References

- Gunning, David, and David Aha. “DARPA’s explainable artificial intelligence (XAI) program.” AI magazine 40.2 (2019): 44-58.

- Doshi-Velez, Finale, and Been Kim. “Towards a rigorous science of interpretable machine learning.” arXiv preprint arXiv:1702.08608 (2017).

- Ribeiro, Marco Tulio, Sameer Singh, and Carlos Guestrin. “‘Why should I trust you?’ Explaining the predictions of any classifier.” Proceedings of the 22nd ACM SIGKDD. ACM, 2016.

- Breiman, Leo. “Random forests.” Machine learning 45.1 (2001): 5-32.

- Mollas, Ioannis, Nick Bassiliades, and Grigorios Tsoumakas. “LioNets: a neural-specific local interpretation technique exploiting penultimate layer information.” Appl Intell (2022). https://doi.org/10.1007/s10489-022-03351-4

- Hara, Satoshi, and Kohei Hayashi. “Making tree ensembles interpretable: A bayesian model selection approach.” International conference on artificial intelligence and statistics. PMLR, 2018.

- Mollas, Ioannis, Nick Bassiliades, and Grigorios Tsoumakas. “Conclusive Local Interpretation Rules for Random Forests.” arXiv preprint arXiv:2104.06040 (2021).

- Ribeiro, Marco Tulio, Sameer Singh, and Carlos Guestrin. “Anchors: High-precision model-agnostic explanations.” Proceedings of the AAAI conference on artificial intelligence. Vol. 32. No. 1. 2018.

- Sundararajan, Mukund, Ankur Taly, and Qiqi Yan. “Axiomatic attribution for deep networks.” International conference on machine learning. PMLR, 2017.

- Vig, Jesse. “BertViz: A tool for visualizing multihead self-attention in the BERT model.” ICLR Workshop: Debugging Machine Learning Models. 2019.

- Shrikumar, Avanti, Peyton Greenside, and Anshul Kundaje. “Learning important features through propagating activation differences.” International conference on machine learning. PMLR, 2017.

- Springenberg, J. T., Dosovitskiy, A., Brox, T., & Riedmiller, M. (2014). Striving for simplicity: The all convolutional net. arXiv preprint arXiv:1412.6806.

- Bach, S., Binder, A., Montavon, G., Klauschen, F., Müller, K. R., & Samek, W. (2015). On pixel-wise explanations for non-linear classifier decisions by layer-wise relevance propagation. PloS one, 10(7), e0130140.

- Lou, Y., Caruana, R., Gehrke, J., & Hooker, G. (2013, August). Accurate intelligible models with pairwise interactions. In Proceedings of the 19th ACM SIGKDD international conference on Knowledge discovery and data mining (pp. 623-631).

- Alvarez Melis, David, and Tommi Jaakkola. “Towards robust interpretability with self-explaining neural networks.” Advances in neural information processing systems 31 (2018).

- Du, Mengnan, et al. “On attribution of recurrent neural network predictions via additive decomposition.” The World Wide Web Conference. 2019.

- Yeh, Chih-Kuan, et al. “On the (in) fidelity and sensitivity of explanations.” Advances in Neural Information Processing Systems 32 (2019).

- Mollas, Ioannis, Nick Bassiliades, and Grigorios Tsoumakas. “Altruist: argumentative explanations through local interpretations of predictive models.” arXiv preprint arXiv:2010.07650 (2020).

- DeYoung, Jay, et al. “ERASER: A benchmark to evaluate rationalized NLP models.” arXiv preprint arXiv:1911.03429 (2019).

Research Surveys on IML

- Adadi, Amina, and Mohammed Berrada. “Peeking Inside the Black-Box: A Survey on Explainable Artificial Intelligence (XAI).” IEEE Access, vol. 6, 2018, pp. 52138–60. DOI.org (Crossref), doi:10.1109/ACCESS.2018.2870052.

- Bibal, Adrien, and Benoît Frénay. “Interpretability of Machine Learning Models and Representations: An Introduction”. 2016.

- Burkart, Nadia, and Marco F. Huber. “A Survey on the Explainability of Supervised Machine Learning.” Journal of Artificial Intelligence Research, vol. 70, Jan. 2021, pp. 245–317. jair.org, doi:10.1613/jair.1.12228.

- Carvalho, Diogo V., et al. “Machine Learning Interpretability: A Survey on Methods and Metrics.” Electronics, vol. 8, no. 8, July 2019, p. 832. DOI.org (Crossref), doi:10.3390/electronics8080832.

- Biran, Or, and Courtenay Cotton. “Explanation and justification in machine learning: A survey.” IJCAI-17 workshop on explainable AI (XAI). Vol. 8. No. 1. 2017.

- Danilevsky, Marina, et al. “A Survey of the State of Explainable AI for Natural Language Processing.” ArXiv:2010.00711 [Cs], Oct. 2020. arXiv.org, http://arxiv.org/abs/2010.00711.

- Doshi-Velez, Finale, and Been Kim. “Considerations for Evaluation and Generalization in Interpretable Machine Learning.” Explainable and Interpretable Models in Computer Vision and Machine Learning, edited by Hugo Jair Escalante et al., Springer International Publishing, 2018, pp. 3–17. DOI.org, doi:10.1007/978-3-319-98131-4_1.

- Dosilovic, Filip Karlo, et al. “Explainable Artificial Intelligence: A Survey.” 2018 41st International Convention on Information and Communication Technology, Electronics and Microelectronics (MIPRO), IEEE, 2018, pp. 0210–15. DOI.org, doi:10.23919/MIPRO.2018.8400040.

- Du, Mengnan, et al. “Techniques for Interpretable Machine Learning.” Communications of the ACM, vol. 63, no. 1, Dec. 2019, pp. 68–77. DOI.org, doi:10.1145/3359786.

- Gilpin, Leilani H., et al. “Explaining Explanations: An Overview of Interpretability of Machine Learning.” 2018 IEEE 5th International Conference on Data Science and Advanced Analytics (DSAA), IEEE, 2018, pp. 80–89. DOI.org, doi:10.1109/DSAA.2018.00018.

- Guidotti, Riccardo, et al. “A Survey of Methods for Explaining Black Box Models.” ACM Computing Surveys, vol. 51, no. 5, Jan. 2019, pp. 1–42. DOI.org, doi:10.1145/3236009.

- Linardatos, Pantelis, et al. “Explainable AI: A Review of Machine Learning Interpretability Methods.” Entropy, vol. 23, no. 1, Dec. 2020, p. 18. DOI.org, doi:10.3390/e23010018.

- Murdoch, W. James, et al. “Interpretable Machine Learning: Definitions, Methods, and Applications.” Proceedings of the National Academy of Sciences, vol. 116, no. 44, Oct. 2019, pp. 22071–80. arXiv.org, doi:10.1073/pnas.1900654116.

Code Resources

- https://github.com/marcotcr/lime

- https://github.com/marcotcr/anchor

- https://github.com/eli5-org/eli5

- https://github.com/sato9hara/defragTrees

- https://github.com/intelligence-csd-auth-gr/LionLearn

- https://github.com/cdpierse/transformers-interpret

- https://github.com/jessevig/bertviz

- https://github.com/albermax/innvestigate

Generic IML Repositories

- https://github.com/rehmanzafar/xai-iml-sota

- https://github.com/lopusz/awesome-interpretable-machine-learning

Other Blogs

- Interpretable Time Series Forecasting: https://ai.googleblog.com/2021/12/interpretable-deep-learning-for-time.html

- Interpretability of NLP Models: https://medium.com/@kalia_65609/interpreting-an-nlp-model-with-lime-and-shap-834ccfa124e4 and https://ai.googleblog.com/2020/11/the-language-interpretability-tool-lit.html

- Interpretability of Image Classification Models: https://towardsdatascience.com/knowing-what-and-why-explaining-image-classifier-predictions-680a15043bad

- LIME Explained in Depth: https://towardsdatascience.com/understanding-how-lime-explains-predictions-d404e5d1829c

- Interpretability Blog: https://blog.ml.cmu.edu/2020/08/31/6-interpretability/

- Interpretability Book: https://christophm.github.io/interpretable-ml-book